Redesigning your ecommerce site or changing platforms is nerve-racking. It’s easy to miss an important step and lose valuable organic search traffic and revenue.

I have assisted many ecommerce sites with redesigns and replatforms. Most go smoothly. Many actually increase SEO traffic. But a few are painful. I strive to learn from them.

When I look back at the successes versus the failures, there’s a clear pattern of corner cases and situations that are not part of the general recommendations in Google’s documentation or on SEO blogs.

In this article, I will share my battle scars — the cases that required extra steps to prevent disaster or to quickly recover after the fact.

First, I will summarize the standard recommendations from Google and from SEO practitioners.

Standard Recommendations

Comprehensive 301 redirect maps. This is the most critical step when a new design or platform requires URL changes. You must map every old URL to the equivalent new one. Do not just map the top pages. Map every page.

Consistent SEO metadata. If you have quality metadata in the existing site — titles, meta descriptions, H1 tags — make sure you at least import them into the new platform. There is no excuse to have pages with no meta descriptions in the new site when they existed in the old one.

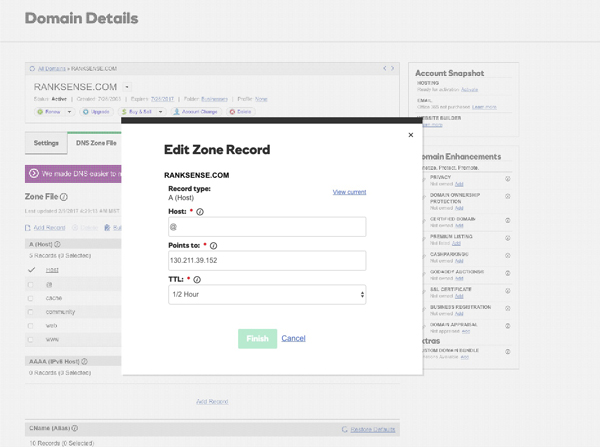

Lower DNS TTL. Anything can go wrong during a platform change, and reverting DNS changes can be painfully slow. Reduce this risk by setting the TTL (time to live) on your site’s main DNS record to the lowest value available.

If, during a replatform, you need to revert to your old site, a low TTL on the domain’s DNS records will force ISPs to recognize the change back to the old site more quickly.

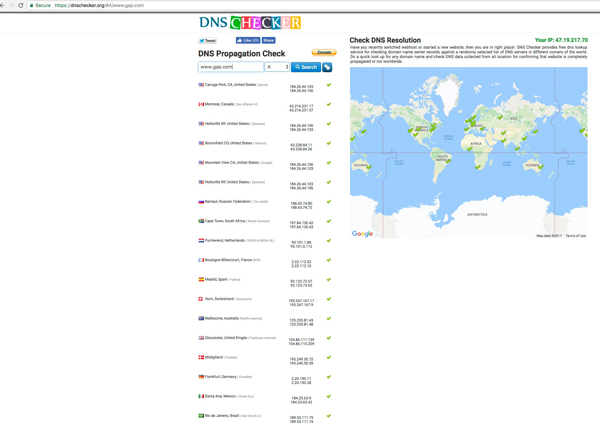

Check DNS propagation. In addition to making sure your DNS switchover happens quickly, and you can go back to the previous site quickly, keep track of how fast your new IP is updated across the web. DNS Checker is a handy, free tool to help you do this.

DNS Checker is a good tool to keep track of how fast your new IP is updated across the web.

Update XML sitemaps and robots.txt. Your XML sitemaps and robots.txt file need to be updated to reflect the new design or platform URL conventions.

Eliminate redirect chains. If you have redirect maps from previous redesigns or migrations, it is not a good idea to chain those redirects to the new ones. It is better to connect the source URLs in the old maps to the final destinations in the new platform.
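As a minimal sketch of that idea, assuming the old and new redirect maps are available as Python dictionaries of source path to destination path (the function name and sample paths are illustrative, not tied to any platform), chained entries could be collapsed like this:

```python
def flatten_redirects(old_map, new_map):
    """Point every source URL straight at its final destination.

    Both arguments map a source path to a destination path. Entries in
    new_map win if the same source appears in both maps.
    """
    combined = {**old_map, **new_map}
    flattened = {}
    for source, target in combined.items():
        seen = {source}
        # Keep following the chain while the target is itself redirected.
        while target in combined:
            if target in seen:
                raise ValueError(f"Redirect loop involving {target}")
            seen.add(target)
            target = combined[target]
        flattened[source] = target
    return flattened


# Hypothetical maps: /old-a was redirected to /p/1002/ in a previous migration.
old_map = {"/old-a": "/p/1002/"}
new_map = {"/p/1002/": "/product/1002/"}
print(flatten_redirects(old_map, new_map))
```

Each source now needs a single hop to reach the new platform, and a loop in the combined maps raises an error instead of shipping silently.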

Monitor crawl stats and crawl errors. After launch, you may still see new 404 errors in Google Search Console, along with big spikes in crawl activity. Both are normal for the first few days. If they persist after a week, you might have infinite crawl spaces (URL patterns that generate an endless number of crawlable pages), which should be fixed.

Move sections individually. It is a lot less risky to keep the new and old sites live at the same time, with the new site invisible and inaccessible to search robots. Then, you redirect and open one section at a time, starting with the lowest-risk ones. This practice is particularly critical for large retailers.

Not-taught-in-school Recommendations

I’ve learned some of the next recommendations the hard way: I first followed best practices, then found corner cases that created all sorts of issues.

Many site owners try to improve seemingly everything during a new site migration or redesign, such as a fancy navigation, infinite scrolling, and improved titles and meta descriptions.

But in my experience, the less you change during a redesign or migration, the better. Phase in the biggest changes. For example, first complete the redesign or replatform, then change title tags, then make navigation changes.

The main benefit of this approach is that if there is a performance hit, it is easier to isolate the cause and revert.

What follows are other recommendations I’ve learned from experience.

Don’t change title tags before launching. The problem with changing title tags is that they affect rankings. If you change title tags across the site you will likely see extensive ranking shifts. In some cases you could increase rankings. But in other cases, you could lose big time. It is not worth the risk.

A couple of weeks after the redesign or migration, and if there are no issues, is a better time to update title tags. But even then, change titles in batches of pages. Keep the title changes that perform better, and roll back the ones that don’t.

Map all URLs from Google Analytics. I often see redirect maps that include only the top-performing pages or, in the best cases, only the URLs in the XML sitemaps. But Googlebot has a long memory. It crawls and ranks pages that are no longer linked from anywhere in the site but are linked from third-party sites. If you forget to map these pages, you will lose valuable traffic.

A simple way to ensure all pages are redirected is to retrieve them from Google Analytics.
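One way to check coverage, sketched here under the assumption that you have exported landing page URLs from Google Analytics (and optionally from sitemaps or logs) into plain Python lists, is to compare them against the sources in your redirect map. The function names and sample URLs are hypothetical:

```python
from urllib.parse import urlsplit


def normalize_path(url):
    """Reduce a URL to its path so the same page compares equal across sources."""
    return urlsplit(url).path or "/"


def unmapped_urls(redirect_map, *url_sources):
    """Return paths seen in any source list that have no entry in the redirect map."""
    known = set()
    for source in url_sources:
        known.update(normalize_path(url) for url in source)
    mapped = {normalize_path(url) for url in redirect_map}
    return sorted(known - mapped)


# Hypothetical inputs for illustration only.
redirect_map = {"/p/1002/": "/product/1002/"}
analytics_urls = ["/p/1002/?utm_source=newsletter", "/p/1003/"]
sitemap_urls = ["http://www.oldstore.com/p/1002/"]
print(unmapped_urls(redirect_map, analytics_urls, sitemap_urls))  # ['/p/1003/']
```

This sketch ignores query strings and hostnames when comparing. If your old platform used parameters to identify pages, the normalization step would need to keep them.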

Make sure redirect maps preserve redundant parameters. Developers frequently look for shortcuts to get more done in less time.

But you don’t want your developers taking shortcuts with your redirect maps. Redirect rules need to be generic enough so they account for any possible variations of the source URLs. In particular, they need to account for redundant parameters you haven’t seen.

The following rewrite is incorrect because it removes the URL parameters (including paid search tracking).

RewriteEngine on

RewriteRule ^p/1002/(.*) http://www.newstore.com/product/1002/? [L,R=301]


This rewrite is correct as it preserves the URL parameters.

RewriteEngine on

RewriteRule ^p/1002/(.*) http://www.newstore.com/product/1002/ [L,R=301]
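To verify this behavior across a full redirect map, you could compare the query string of each requested URL with the Location header your server returns. The following is a rough sketch of that comparison only; it assumes you have already collected the source and destination URLs, and the function name and the oldstore.com hostname are made up for illustration:

```python
from urllib.parse import parse_qsl, urlsplit


def dropped_parameters(source_url, location_url):
    """Return (name, value) pairs present in the source URL but missing from the redirect target."""
    source_params = parse_qsl(urlsplit(source_url).query, keep_blank_values=True)
    target_params = set(parse_qsl(urlsplit(location_url).query, keep_blank_values=True))
    return [pair for pair in source_params if pair not in target_params]


# A redirect that strips the query string loses the paid search click ID.
print(dropped_parameters(
    "http://www.oldstore.com/p/1002/?gclid=abc123",
    "http://www.newstore.com/product/1002/",
))  # [('gclid', 'abc123')]
```

An empty list means every parameter survived the redirect.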

Don’t consolidate large groups of pages. During redesigns or replatforms, ecommerce merchants often want to eliminate some sections of their stores, or consolidate sections, to make it easier for visitors to complete their purchases.

It’s wrong to assume that 301 redirecting removed or consolidated sections and pages will improve traffic and rankings. While the consolidated pages generally receive a boost in page reputation and perform better than before, they usually lack the content of the pages they replaced, and so don’t rank for the same terms.

If you need to consolidate pages, do it after the redesign or migration, and also consider opening faceted navigation pages that can make up for the consolidated ones.

Don’t remove too much content. Another important lesson I’ve learned is to keep the overall amount of unique content on your ecommerce site the same after the replatform.

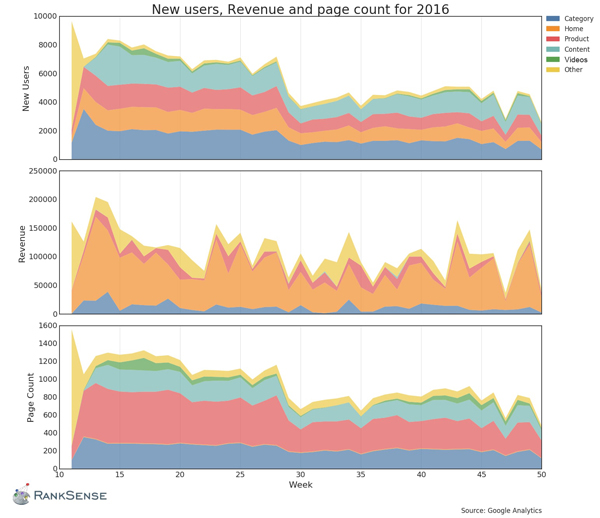

The graphs show a post-migration analysis I performed to understand why the site’s traffic and revenue were down, and to find the problematic pages.

A post migration analysis performed to understand why the traffic and revenue were down.

Note on the graphs above that products, content, and the home page were performing similarly, but the category pages were performing at half their previous levels. We compared the total number of pages and the word counts before and after and saw huge reductions in the amount of content.
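A before-and-after comparison like this can be approximated with a short script. The sketch below assumes you have already crawled both versions of the site and grouped per-page word counts by page type; the function name and the numbers are placeholders, not data from the migration described above:

```python
def content_change_by_type(before, after):
    """Summarize page counts and word totals per page type.

    before/after: dict mapping a page type to a list of word counts, one per page.
    """
    report = {}
    for page_type, old_counts in before.items():
        new_counts = after.get(page_type, [])
        old_total = sum(old_counts)
        new_total = sum(new_counts)
        # Relative change in total words; 0.0 when there was no content before.
        change = (new_total - old_total) / old_total if old_total else 0.0
        report[page_type] = {
            "pages_before": len(old_counts),
            "pages_after": len(new_counts),
            "words_before": old_total,
            "words_after": new_total,
            "word_change": round(change, 2),
        }
    return report


# Placeholder numbers for illustration only.
before = {"category": [400, 600], "product": [200]}
after = {"category": [250], "product": [200]}
for page_type, stats in content_change_by_type(before, after).items():
    print(page_type, stats)
```

A large negative word_change for one page type, alongside a traffic drop for the same type, points to where content was lost.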

Wait to move to HTTPS. I used to recommend taking the redesign or replatform as an opportunity to move to HTTPS. However, recent migrations have been far more complicated because of this change. So I now recommend moving to HTTPS as a separate step after the migration.

The move to HTTPS requires as much careful planning as a redesign and replatform. I will cover it in detail in my next article.

Don’t forget URL parameter settings. Your new platform is likely to require completely new URL parameter settings in Google Search Console. These settings help Googlebot crawl the site efficiently. It is wise to configure the new URL parameters before (or at the time of) the migration, while keeping the old ones intact.

Wait to launch infinite scrolling. SEO-friendly infinite scrolling requires a complicated implementation that also needs to support standard pagination. If you launch without an SEO-friendly infinite scroll, your paginated category pages may not be crawled or indexed.

Consider completing the replatform without infinite scrolling, then add the feature in an SEO-friendly way later.

Be aware of JavaScript rendered pages. Sophisticated ecommerce systems sometimes render product listing pages using JavaScript in a way that Google doesn’t index. The challenging part is that you need to use Search Console’s Fetch and Render to check your pages for this, but Search Console only works with public sites, and your redesign or replatform would be in a staging environment.

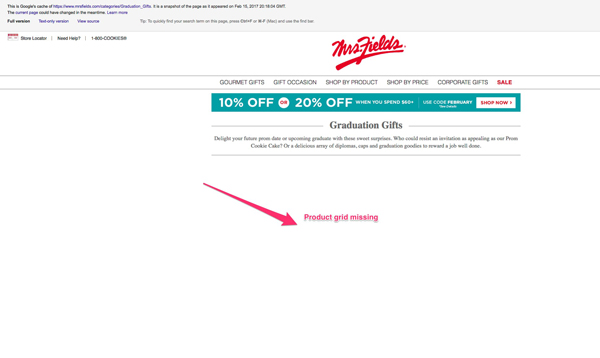

A simple shortcut is to ask your ecommerce platform to provide examples of other public sites running the system, and then use Google’s cache command to see if there are issues.

Ask your ecommerce platform to provide examples of other sites running the system, and then use Google’s cache command to see if there are issues. In this case, a page grid is missing.


Another solution is to set up an example test page to open publicly and check with Search Console’s Fetch and Render feature.


Migrate old redirects and redirect configurations. Some development teams at retailers I work with discard old redirect mapping files after the migrations. But the problem is that redirects are permanent. Google never forgets them. It continues to re-crawl even old 404 or 410 URLs in case they ever come back.

I experienced this issue last year during a replatform. Google reported over 20,000 404 errors after the replatform, making it nearly impossible to identify the actual post-migration 404s. The issue was complicated further because Search Console only allows you to pull 1,000 404 URLs at a time, and the new platform vendor kept web server logs for only 30 days.

Note that Search Console used to allow you to pull all errors using its API, but Google changed it and now offers only a limited version of that capability.

Keep 90 days’ worth of web server logs. Search Console is a fantastic, free SEO tool. But when it comes to narrowing down critical SEO issues, web server logs are your friend. You can find any URLs crawled or not crawled by Google and other search engines, and also identify any major problems.

If you face a lot of unexpected 404 errors after a migration, a comprehensive list of the URLs visited by Google before and after the migration is crucial for completing your redirect maps quickly.
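Pulling that list out of raw logs does not require special tooling. Here is a minimal sketch, assuming the logs use the common Apache or Nginx "combined" format; the function name and the sample log line are illustrative. It matches on the user agent string only, which can be spoofed, so treat the output as a starting point:

```python
import re

# Combined log format: host ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_404s(lines):
    """Return the unique paths, in first-seen order, that returned 404 to a Googlebot user agent."""
    paths = []
    seen = set()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        if match["status"] == "404" and "Googlebot" in match["agent"]:
            path = match["path"]
            if path not in seen:
                seen.add(path)
                paths.append(path)
    return paths


# Made-up sample line for illustration.
sample = [
    '66.249.66.1 - - [10/Oct/2017:13:55:36 +0000] "GET /p/1002/ HTTP/1.1" 404 512 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
print(googlebot_404s(sample))  # ['/p/1002/']
```

Running this over logs from before and after the launch gives two lists whose difference shows which 404s the migration introduced.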

Map image and video URLs. I often see only web page URLs in redirect maps. But if you check Search Analytics in Search Console, you will likely find a meaningful amount of traffic coming from images. Image and video URLs often get links, too. So it is a good idea to create 301 redirects to the new image and video URLs in the replatform.

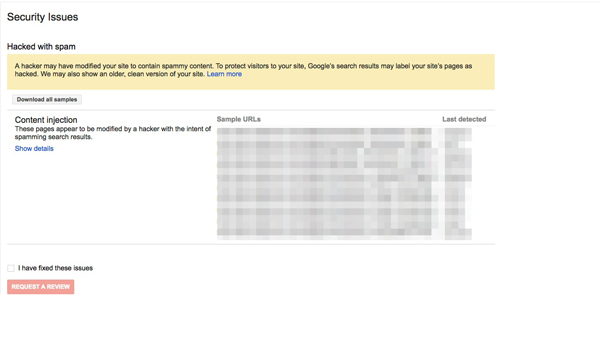

Avoid fancy analytics implementations. This is probably the most obscure issue I have seen after a replatform. Google labeled the site as hacked with spam content injected. When we looked closer, it was not a hack but an advanced Google Analytics implementation. The developers used hidden div elements for some custom properties instead of a more standard use of JavaScript variables. The hidden div elements likely presented Googlebot with a pattern similar to hidden spam text.

In one instance, the developers used hidden div elements for some custom properties instead of a more standard use of JavaScript variables.

Double check analytics setup. Sometimes post-migration performance appears worse or better than expected because of a tracking issue. Some pages might be missing Google Analytics tags or have duplicate tags, or settings that consolidate third-party shopping cart metrics might be missing, so that the traffic is labeled as referrals.

Another issue I’ve encountered is paid search traffic incorrectly labeled as organic because the redirect maps strip out the gclid parameter used for tagging AdWords campaigns.

It is wise to crawl the new site with a tool such as Screaming Frog to ensure all analytics tags are in place and correct.
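If you would rather script a quick check on top of a crawl, a crude heuristic is to count how often your tracking ID appears in each page’s HTML. This sketch assumes you already have the HTML for each URL in a dictionary; the function name and the tracking ID are hypothetical, and a count above one only suggests a possible duplicate that is worth inspecting manually:

```python
def audit_tracking(pages, tracking_id):
    """Flag pages where the tracking ID is absent or appears more than once.

    pages: dict mapping a URL to its HTML source.
    """
    issues = {}
    for url, html in pages.items():
        count = html.count(tracking_id)
        if count == 0:
            issues[url] = "missing"
        elif count > 1:
            issues[url] = "duplicate"
    return issues


# Hypothetical pages and tracking ID for illustration.
pages = {
    "/product/1002/": "<script>ga('create', 'UA-12345-1', 'auto');</script>",
    "/cart/": "<html><body>No tag here</body></html>",
}
print(audit_tracking(pages, "UA-12345-1"))  # {'/cart/': 'missing'}
```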

Monitor logs post launch. I require clients’ development teams to post web server logs daily to an SFTP site or an Amazon S3 bucket so I can pull them and monitor Googlebot’s activity faster and with better resolution than in Google Search Console.

For example, we launched a new site where pages only show other language versions when the request includes the Accept-Language header, which Googlebot supposedly supports. But after many weeks, we still have not seen Googlebot fetch pages in alternative languages.


Conclusion

My lessons in managing organic search traffic after redesigns or replatforms won’t stop here. This list will grow with other migration battles. If you have experiences that I have not covered, please share them in the comments.