If predictions are correct, AI agents can soon do “real” work, such as adjusting advertising budgets, updating product listings, and authorizing refunds.

But is there a security risk? Before it can delegate that level of control, a business must ensure the agent will behave predictably and safely.

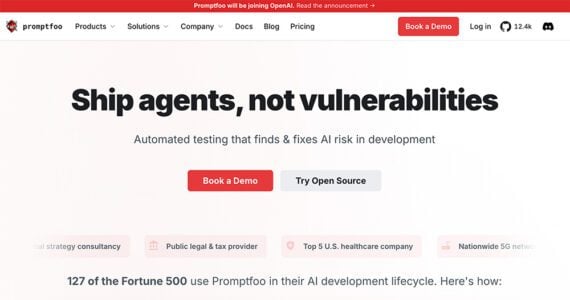

That concern helps explain why OpenAI has announced plans to acquire Promptfoo, a startup that develops tools for testing and securing artificial intelligence applications.

OpenAI’s plan to acquire Promptfoo may signal how enterprise AI systems test for prompt vulnerabilities.

Testing AI Systems

Promptfoo began as an open-source framework for developers to evaluate prompts and AI responses. The platform evolved into a testing environment, enabling engineers to run thousands of simulated AI interactions before releasing an application or agent.

Those tests can expose weaknesses, including:

- Opportunities for prompt injection attacks,

- Agents using tools in unsafe ways,

- Unintended API calls,

- Data leakage through responses.

Promptfoo is akin to an AI quality-assurance framework. Traditional software testing verifies code with known outcomes. Yet AI systems behave differently. Developers need tools that can probe many possible inputs and edge cases. Promptfoo automates that process.

AI Agents

The Promptfoo acquisition also implies a shift in how companies deploy AI agents and applications.

Enterprise deployments thus far have focused on chatbots and knowledge assistants. Many rely on retrieval-augmented generation, in which models answer questions by retrieving information from a database.

More recently, developers have begun building AI agents that can plan tasks, call external tools, and execute multi-step workflows. Examples include:

- Analyze advertising performance and adjust campaign budgets,

- Manage customer-service workflows,

- Update product listings or pricing,

- Run marketing or analytics queries.

The agents interact directly with CRMs, inventory databases, and ecommerce platforms. That capability expands what an AI agent can do. It also increases the risks.

Industry Shift

OpenAI’s acquisition is not the only signal that AI agents are increasingly prominent, or that businesses must focus on AI security.

Meta recently acquired Moltbook, a social network of sorts for autonomous AI agents. The company’s technology enables agents to communicate and coordinate through a shared system.

Moltbook is an early look at how AI agents communicate.

Taken together, the actions of OpenAI and Meta highlight different parts of the emerging agent ecosystem.

Meta’s acquisition focuses on enabling AI agents to interact with one another, while OpenAI’s addresses their behavior and safety.

The combination suggests that large tech companies anticipate software agents that interact with humans and other agents.

Security

An AI chatbot that produces an incorrect answer is typically an inconvenience‚ a hallucination.

An AI agent with system access can create real problems. From a prompt-injection attack, for example, an agent could:

- Share sensitive customer information,

- Trigger unauthorized or fraudulent refunds,

- Modify pricing or inventory,

- Expose proprietary data to other agents.

Businesses, therefore, need guardrails that prevent manipulation and unpredictability.

Promptfoo appears to provide that capability. By integrating testing tools directly into its enterprise AI platform, OpenAI can help developers identify vulnerabilities before deploying agents in production environments.

Fraud

Security extends beyond internal systems to include fraud prevention.

Jeff Otto, chief marketing officer at Riskified, a fraud-prevention platform, said the rise of AI agents could create software systems that interact with one another (similar to Moltbook).

“Meta’s decision to house a social network for AI agents within Superintelligence Labs is a strong signal that agentic commerce is moving from theory to reality,” Otto said. “Moltbook’s agents were built on the OpenClaw framework, which enables autonomous agents to interact, coordinate, and potentially transact on behalf of human users.”

If that vision develops, Otto said, ecommerce fraud detection will need to evolve as well.

“That shift sets the stage for a high-stakes machine-versus-machine environment,” he said. “For retailers, the traditional rules-based fraud playbook is no longer sufficient. When bots are the ones clicking ‘buy,’ merchants need a defense layer that can distinguish between a legitimate AI assistant and a malicious agent in milliseconds.”

Agentic Commerce

With their agent-related acquisitions, OpenAI and Meta are presumably planning for what’s next.

If that future includes agentic commerce, merchants must consider an environment in which software agents — not just humans — do the shopping.