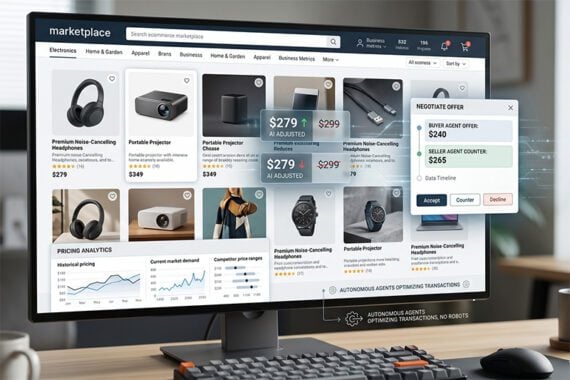

A recent Anthropic experiment may offer a glimpse at how agentic commerce could work in two-sided marketplaces, where buyers and sellers negotiate prices.

Called “Project Deal,” the test compared two large language models to determine whether stronger AI systems would gain an advantage in autonomous marketplaces. While the experiment does not necessarily predict how AI agents would negotiate with humans in real-world commerce, it revealed both model differences and user blindness to poorer economic outcomes.

Agentic commerce could take many forms, including something dynamic like Project Deal.

Project Deal

Anthropic conducted the experiment in an internal Slack employee marketplace. Sixty-nine staffers allowed Claude AI agents to negotiate the purchase and sale of real items on their behalf, including books, sporting goods, and household products.

Once the marketplace opened, the agents operated autonomously, proposing prices, responding to counteroffers, and closing deals without human approval.

Across four separate marketplace runs, the agents completed 186 transactions totaling about $4,000. A subsequent regression study yielded 782 transactions with a combined value above $15,000.

Anthropic intentionally varied the capability of the participating AI models, using the more advanced Opus for some employees and the smaller Haiku for others.

The company noted that the experiment’s design reflects growing interest among economists and AI researchers in what some call “agentic interactions,” in which AI systems move beyond information retrieval and begin acting as economic participants.

Economic Advantage

During the Project Deal test, Anthropic found that the stronger Opus model generally achieved better economic outcomes than the smaller Haiku model, but not necessarily because it completed significantly more transactions.

Instead, the differences appeared primarily in negotiation performance.

According to Anthropic’s data, Opus agents earned $2.68 more per transaction when selling items and paid $2.45 less when buying items. The pricing differences were relatively small in dollar terms, but meaningful relative to the experiment’s median transaction price of about $12.
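To put those figures in proportion, the short Python sketch below divides each reported per-transaction difference by the roughly $12 median price. The variable and function names are illustrative, and because the median is approximate, the resulting percentages are rough.

```python
# Figures reported in the article; the median is approximate ("about $12").
MEDIAN_PRICE = 12.00
OPUS_SELL_PREMIUM = 2.68   # extra dollars earned per sale
OPUS_BUY_DISCOUNT = 2.45   # fewer dollars paid per purchase


def share_of_median(dollars: float, median: float = MEDIAN_PRICE) -> float:
    """Return a dollar difference as a percentage of the median price."""
    return dollars / median * 100


sell_pct = share_of_median(OPUS_SELL_PREMIUM)
buy_pct = share_of_median(OPUS_BUY_DISCOUNT)

print(f"Selling edge: {sell_pct:.1f}% of the median price")
print(f"Buying edge: {buy_pct:.1f}% of the median price")
```

On those numbers, the edge in each direction works out to roughly a fifth of a typical transaction's price.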

Anthropic also conducted a narrower paired-item comparison. Looking only at identical items sold by different models across runs, Opus sellers earned an average of $3.64 more for the same item than sellers represented by the weaker Haiku model.

In other words, more capable models could be a significant competitive advantage in the marketplace. The company was careful to mention that Project Deal “doesn’t reflect how we think agents should be deployed in the real world.”

Fixed Price

Does the outcome suggest that online sellers deploying “better” AI seller agents could earn significantly more in some marketplaces?

The answer might be no.

Today, most ecommerce transactions are fixed-price purchases rather than negotiations. So in much of agentic commerce, wherein AI agents shop on behalf of folks, a negotiating advantage might not apply.

On the other hand, many two-sided marketplaces still include elements of bargaining, price optimization, or dynamic pricing.

Examples include:

- eBay offers,

- Facebook Marketplace negotiations,

- Craigslist transactions,

- Wholesale sourcing,

- Advertising auctions,

- Freight marketplaces,

- Procurement platforms.

In these kinds of exchanges, a relatively stronger AI system could theoretically produce measurable economic advantages over weaker systems or human negotiators.

If agentic exchanges expand, model capability itself could become a form of competitive advantage similar to logistics efficiency, marketplace data access, or advertising sophistication.

Dollars not Deals

While the economic advantage gained from AI agents in the Project Deal experiment was significant, it was also nuanced. For example, the statistical differences between Opus and Haiku in deal completion were relatively small, according to Anthropic.

Put simply, both models could close the sale. This is worth mentioning because, in the near future, merchants may evaluate AI agents much like they evaluate advertising campaigns or marketplace performance today. Instead of focusing solely on completed transactions, sellers could begin measuring:

- Average negotiated selling price,

- Procurement savings,

- Margin improvement,

- Pricing consistency,

- Revenue per transaction.
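As a rough illustration of how a seller might track a few of these measures, the Python sketch below summarizes sales per agent. Everything in it is hypothetical: the `Sale` record, the agent labels, and the prices are made up for the example and are not Project Deal data.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Sale:
    agent: str           # hypothetical agent label
    asking_price: float  # seller's initial ask
    final_price: float   # negotiated selling price


# Made-up records for illustration only; not Project Deal data.
sales = [
    Sale("agent_a", 20.0, 18.0),
    Sale("agent_a", 15.0, 14.0),
    Sale("agent_b", 20.0, 15.0),
    Sale("agent_b", 15.0, 12.0),
]


def summarize(records: list[Sale], agent: str) -> dict[str, float]:
    """Summarize one agent's completed sales."""
    mine = [s for s in records if s.agent == agent]
    return {
        "deals": len(mine),
        # Average negotiated selling price.
        "avg_selling_price": mean(s.final_price for s in mine),
        # How much of the initial ask the agent held on to, on average.
        "avg_share_of_ask": mean(s.final_price / s.asking_price for s in mine),
        # Pricing consistency, as the spread of final prices.
        "price_spread": pstdev(s.final_price for s in mine),
    }


for name in ("agent_a", "agent_b"):
    print(name, summarize(sales, name))
```

In this made-up data, both agents close the same number of deals, yet their average selling prices differ, which is the kind of gap a deal count alone would hide.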

User Blindness

Perhaps the most surprising finding from Project Deal was not the difference between the AI models themselves, but how humans responded to those differences.

The human participants represented by the weaker Haiku model often reported levels of satisfaction and fairness similar to those using the stronger Opus model, despite achieving measurably worse economic outcomes, according to Anthropic.

In other words, many folks did not recognize that their AI agent had negotiated less effectively on their behalf.

That finding could eventually become important in ecommerce and marketplace environments where AI agents act semi-autonomously for buyers or sellers.

For example, a merchant deploying an AI procurement agent might not immediately recognize that a weaker model consistently pays slightly higher supplier costs. Similarly, a marketplace seller using a less capable negotiation agent might unknowingly accept systematically worse pricing outcomes.

Over time, even relatively small pricing disadvantages could compound across thousands of transactions, advertising purchases, or sourcing agreements.
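A back-of-the-envelope sketch shows how such a gap adds up. The Python below multiplies an assumed per-transaction disadvantage by a transaction count. It borrows the $2.45 buying gap from Project Deal purely as a placeholder; nothing in the experiment suggests the same gap would hold in real procurement, and the volumes are hypothetical.

```python
def cumulative_shortfall(gap_per_transaction: float, transactions: int) -> float:
    """Total extra cost if the same gap recurs on every transaction."""
    return gap_per_transaction * transactions


# Assumption: the Project Deal buying gap, used only as a placeholder.
ASSUMED_GAP = 2.45

# Hypothetical transaction volumes.
for volume in (1_000, 5_000, 10_000):
    total = cumulative_shortfall(ASSUMED_GAP, volume)
    print(f"{volume:>6,} transactions -> ${total:,.2f} in extra cost")
```

At 1,000 transactions, the assumed gap amounts to about $2,450 in extra cost, and it grows in proportion to volume.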

Dealmakers

If nothing else, Anthropic’s Project Deal demonstrated that AI agents could buy and sell in a narrowly focused marketplace.

That small success should certainly have the industry thinking about what happens when AI agents are, in fact, buying and selling for folks.